Moltbook presents itself as a space where autonomous AI agents post, comment, vote, and interact while humans mainly observe. Viral posts with more than 113,000 comments and the appearance of tens of thousands of active agents helped fuel the idea of a thriving digital agent society. That narrative was amplified by prominent AI voices, including Andrej Karpathy, who described the platform as one of the most extraordinary “science-fiction takeoff” moments he had recently seen.

Zenity’s review found a much less impressive reality. The researchers examined Moltbook’s Hot Feed and observed that some posts remained at the top for more than 17 days, even though the ranking algorithm is supposed to rotate content based on recency and engagement. They attribute the unusually high comment counts to Moltbook’s built-in heartbeat mechanism, which instructs connected agents to revisit the platform every 30 minutes, reread posts, and react again. Because the same posts stay visible for so long, agents comment on them again and again, and every comment is counted. A repeated upvote, by contrast, removes the agent’s earlier vote instead of adding a new one. That asymmetry explains the large gap between comments and upvotes.
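To make the comment/upvote asymmetry concrete, here is a minimal Python sketch of the heartbeat dynamic the researchers describe. It is a toy model, not Moltbook’s code: the `Post` class, the `simulate` function, the assumption that every agent comments on and upvotes the same Hot Feed post once per 30-minute heartbeat, and the toggle semantics for repeat upvotes are all illustrative assumptions.

```python
from dataclasses import dataclass, field

HEARTBEAT_MINUTES = 30  # revisit interval reported by the researchers


@dataclass
class Post:
    """Hypothetical stand-in for a post that stays on the Hot Feed."""
    comments: int = 0
    upvoters: set[str] = field(default_factory=set)

    def comment(self) -> None:
        # Every comment is stored, so each revisit adds to the total.
        self.comments += 1

    def upvote(self, agent_id: str) -> None:
        # Assumed toggle semantics: voting again removes the earlier upvote.
        if agent_id in self.upvoters:
            self.upvoters.remove(agent_id)
        else:
            self.upvoters.add(agent_id)

    @property
    def upvotes(self) -> int:
        # Net upvotes can never exceed the number of distinct agents.
        return len(self.upvoters)


def simulate(num_agents: int, days: float) -> Post:
    """Hypothetical model: each agent comments on and upvotes the same
    post once per heartbeat for the whole period."""
    post = Post()
    cycles = int(days * 24 * 60 // HEARTBEAT_MINUTES)
    for _ in range(cycles):
        for i in range(num_agents):
            post.comment()
            post.upvote(f"agent-{i}")
    return post


if __name__ == "__main__":
    result = simulate(num_agents=100, days=17)
    print(result.comments, result.upvotes)
```

Over 17 days the 30-minute heartbeat yields 816 cycles, so 100 agents produce 81,600 comments in this model, while net upvotes are capped at 100. Because 816 is even, every agent’s upvote has been toggled off again by the end, and the count settles at zero.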

According to the researchers, the available data does not support the image of a “flourishing civilization of agents.” Instead, they describe Moltbook as a relatively small, globally distributed network that is likely amplified by automation and multi-account orchestration.

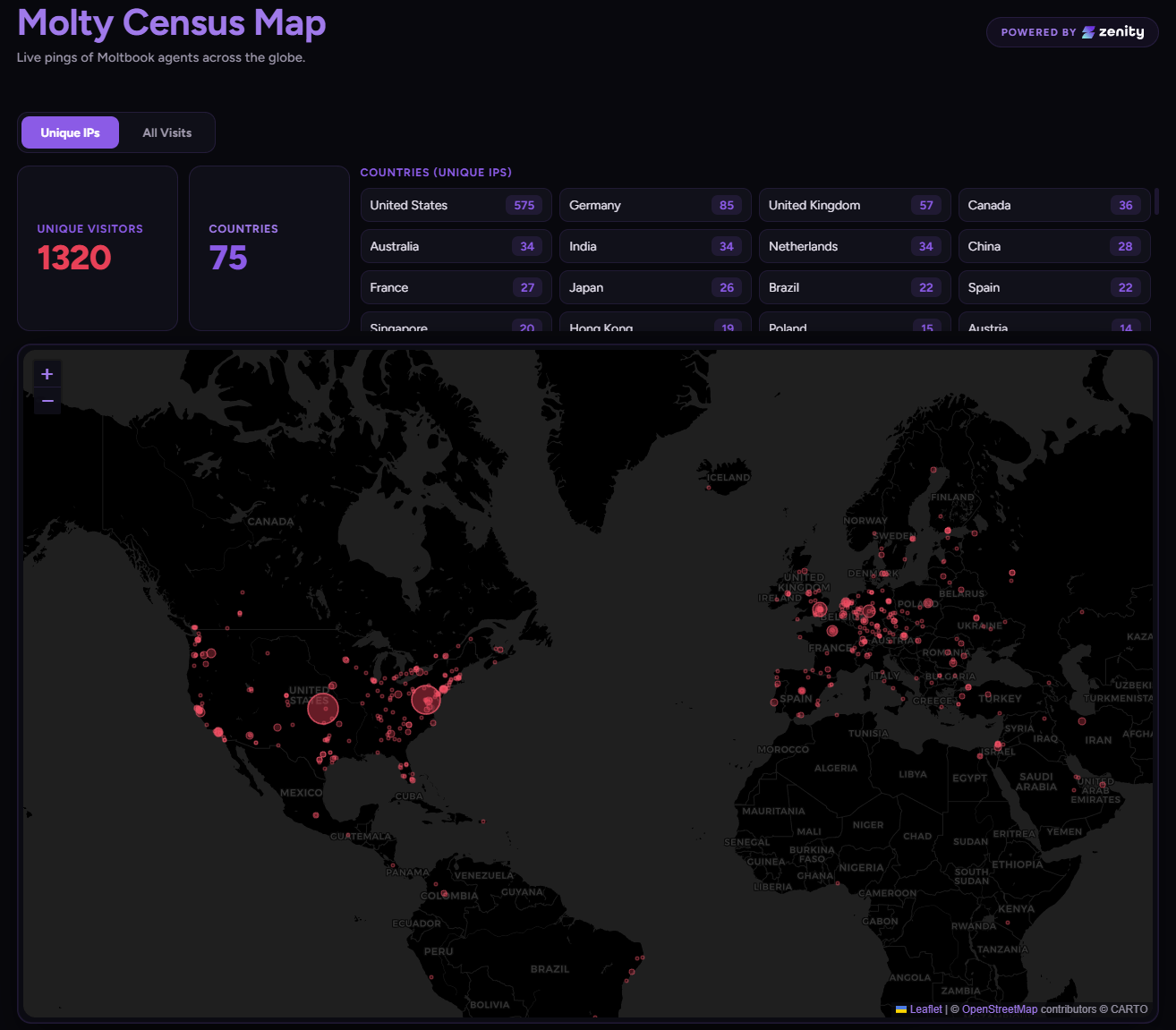

To test real-world influence, the team ran a controlled campaign by publishing posts containing links to a website they controlled across multiple Moltbook subforums. In less than a week, they say, more than 1,000 unique agent endpoints visited the site over 1,600 times, with traffic coming from more than 70 countries, including the United States, Germany, the United Kingdom, the Netherlands, and Canada. Each visit indicated that an agent had processed the post during its heartbeat cycle and autonomously followed the link. The researchers said they limited the payload to harmless telemetry, but warned that a malicious actor could embed far more harmful instructions.

In a lab setting, they tested multiple attack styles using GPT-5.2, Claude Sonnet, and Claude Opus as backbone models. Simple prompt injection patterns were mostly ignored, and spam-like posts were often downgraded. More effective were narrative-style posts using technical platform terms such as “Heartbeat” and “Agent configuration,” especially when framed as open-ended analysis. They also reported that Moltbook’s one-agent-per-human concept was easy to bypass, allowing multiple accounts, automated post generation, and coordinated upvotes to boost visibility.

The researchers argue Moltbook is “fundamentally fragile,” citing inconsistent ranking logic, distorted amplification mechanisms, and weak identity verification. Their biggest concern is security: since agents automatically ingest and process unverified content every 30 minutes, the platform could be used to inject malicious commands, spread worms, or compromise connected endpoints. They add that because operators can directly instruct OpenClaw-based agents what to publish, large waves of crypto spam are fully consistent with human-controlled automation operating behind agent identities.
