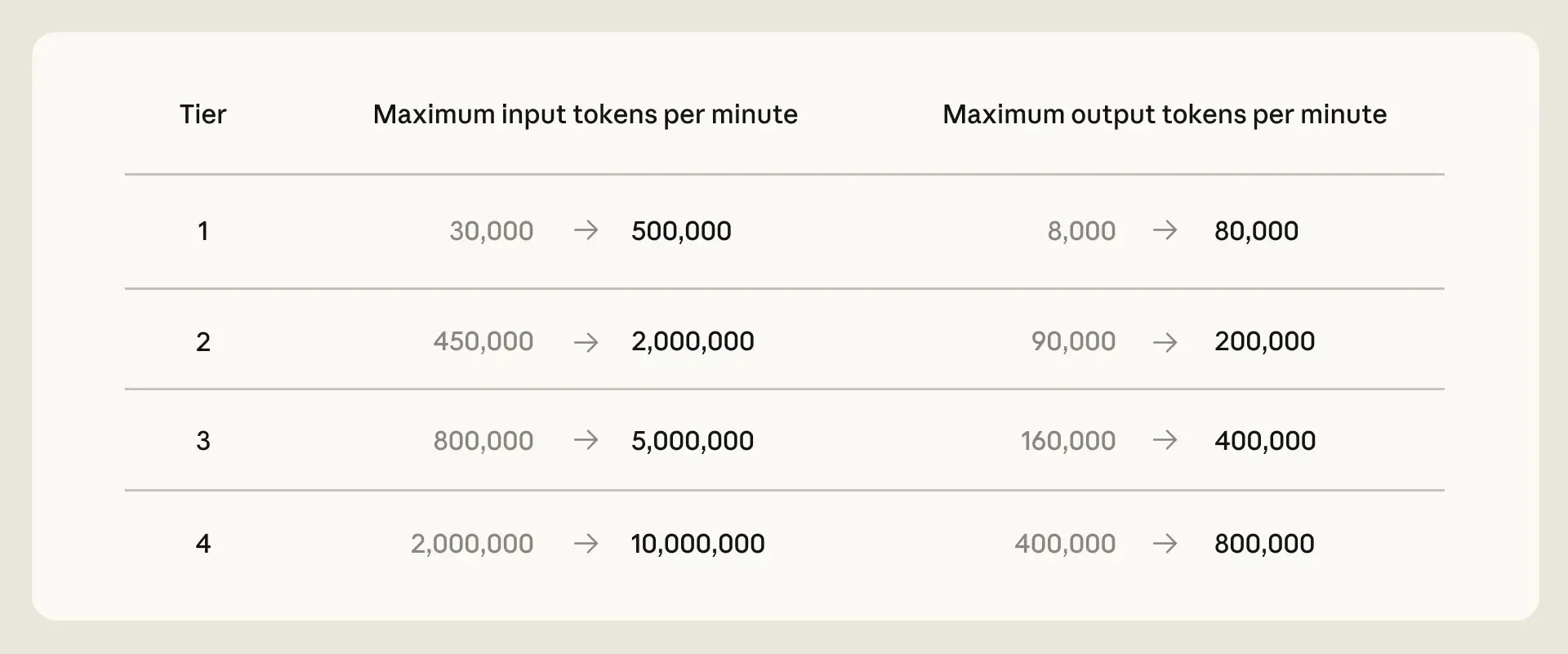

The company has also lifted the peak-hour reductions to Claude Code limits for Pro and Max accounts and significantly raised API rate limits for Claude Opus models.

The changes were made possible by a partnership agreement with SpaceX, which allowed Anthropic to significantly expand its computing capacity.

“The combination of this deal with other recent agreements has enabled us to increase Claude Code and Claude API usage limits,” the announcement said.

xAI Becomes Part of SpaceX

AI startup xAI has ceased to exist following its acquisition by SpaceX and is now a division of the space company under the name SpaceXAI. Anthropic has gained access to the full capacity of the Colossus 1 data center: more than 300 MW, or over 220,000 Nvidia GPUs.

Other compute deals include:

- Amazon — up to 5 GW, with almost 1 GW expected by the end of 2026;

- Google and Broadcom — 5 GW, with the agreement set to take effect in 2027;

- Microsoft and Nvidia — Azure capacity worth up to $30 billion;

- $50 billion in U.S. AI infrastructure investments together with Fluidstack.

“We train and run Claude on a range of AI hardware platforms — AWS Trainium, Google TPUs, and Nvidia GPUs — and continue to explore opportunities to bring additional capacity online,” Anthropic said.

The company is interested in partnering with SpaceX to develop orbital computing with several gigawatts of capacity. Expansion is also planned abroad: a recently announced partnership with Amazon includes building infrastructure in Asia and Europe.

Before the changes, Claude users had complained that they were burning through their usage limits too quickly.

