Anthropic, he said, continues to adhere to two red lines: no mass domestic surveillance (which implicitly suggests that large-scale foreign surveillance is considered acceptable) and no fully autonomous weapons.

Amodei emphasized that Anthropic was the first AI company to deploy its models within classified government networks, at national laboratories, and for security-focused clients. Claude is already being used extensively for intelligence analysis, simulations, operational planning, and cyber operations.

On autonomous weapons, Amodei argued that today’s AI systems are simply not reliable enough to remove humans entirely from the decision-making loop. Anthropic offered the Pentagon joint research on improving reliability, but that proposal was rejected.

Regarding domestic surveillance, Amodei warned that AI could combine fragmented, individually harmless data points into comprehensive profiles of every citizen, automatically and at scale.

He also pointed to what he described as contradictory actions by the Pentagon: labeling Anthropic a supply-chain risk while simultaneously treating it as indispensable to national security under the Defense Production Act (DPA). According to Amodei, these positions are mutually exclusive. Despite the pressure, Anthropic does not intend to change its stance. If the Pentagon removes Anthropic from its systems, the company says it will facilitate a smooth transition to another provider.

Anthropic has also forgone several hundred million dollars in revenue by cutting off access to Claude for Chinese companies with ties to the Chinese Communist Party, and it actively supports strict export controls on advanced chips.

Here, Anthropic is clearly also acting in its own interest: chip export controls weaken Chinese competitors, and allowing Chinese firms to use Claude to develop their own models would undermine Anthropic’s long-term business. Forgoing this revenue therefore also serves as a form of self-protection.

Pentagon concessions are not enough for Anthropic

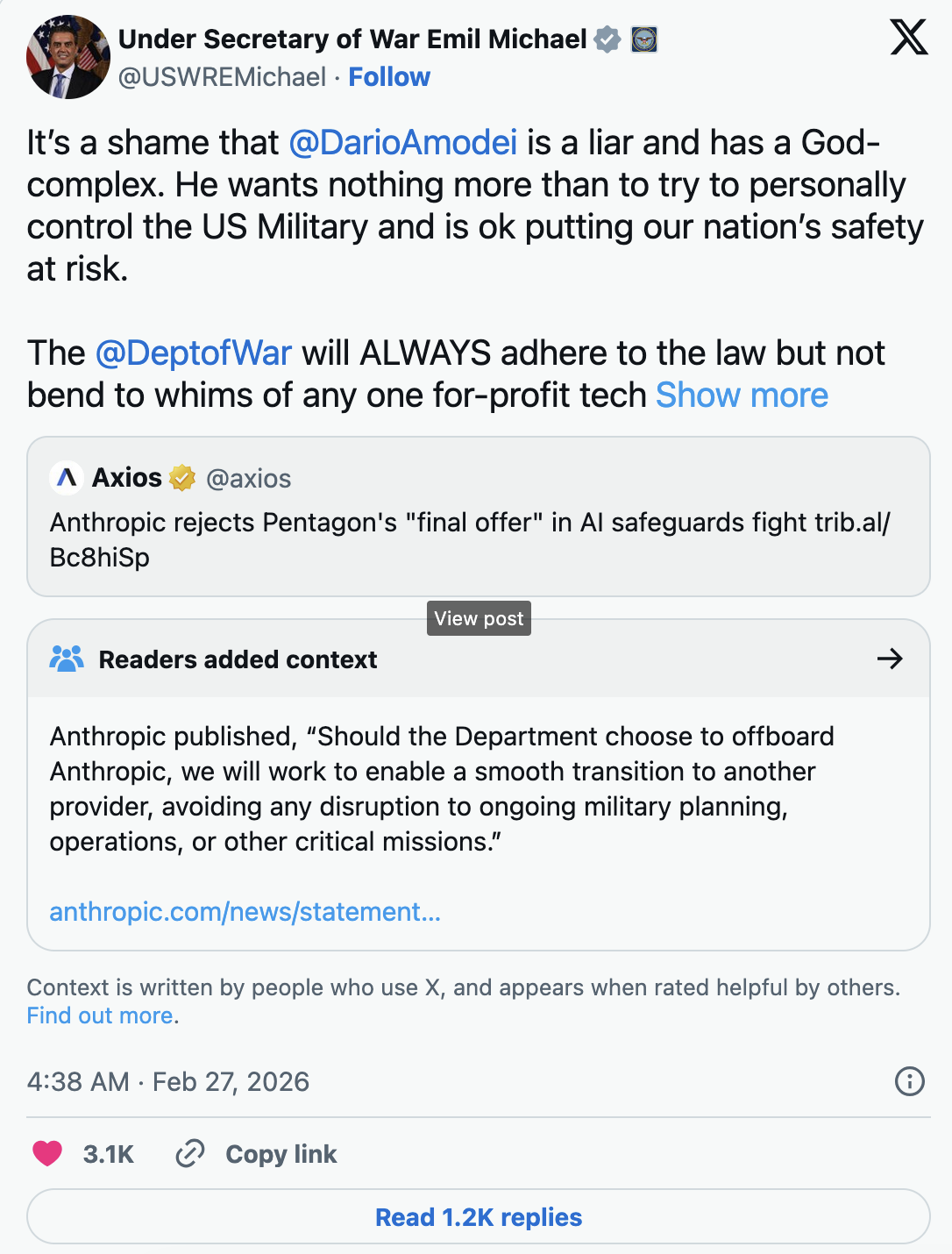

Pentagon Chief Technology Officer Emil Michael told CBS that the military had made “very good concessions.” According to him, the Pentagon offered to formally acknowledge existing laws against domestic surveillance and existing Pentagon policies on autonomous weapons in writing. It also offered Anthropic a seat on the military’s AI ethics council.

Anthropic, however, deemed these concessions insufficient. In response, Michael took to X and called Anthropic CEO Amodei a “liar” with a “God complex.”

When asked why the Pentagon would not explicitly guarantee that Anthropic’s models would not be used for mass surveillance or fully autonomous weapons decisions, Michael said this was already prohibited by existing law and Pentagon policy. “You have to trust your military to do the right thing,” he said.

At the same time, he stressed the need to prepare for the future and for China’s use of AI: “We will never give a company a written assurance that we cannot defend ourselves.” Anthropic’s deadline expires on Friday at 5:01 p.m.

Can the Pentagon force Anthropic to build “WarClaude”?

Legal scholar Alan Z. Rozenshtein analyzed on Lawfare what the Pentagon can actually compel under the Defense Production Act. The law dates back to the Korean War and grants the president broad powers to force companies to deliver goods in the interest of national defense.

According to Rozenshtein, the legal assessment depends on what exactly the Pentagon demands. Two scenarios are conceivable. First, the Pentagon could require Anthropic to supply Claude without contractual usage restrictions—that is, the same model, but without clauses prohibiting mass surveillance and autonomous weapons. In that case, the government would have relatively strong arguments, since the product itself would remain unchanged.

Second, the Pentagon could demand that Anthropic retrain Claude entirely and remove the safety constraints from the model itself. This would be far more difficult to enforce, Rozenshtein argues, because it would effectively constitute a new product. It is legally unclear whether the DPA can force companies to create products they do not otherwise offer.

A forced retraining would also raise First Amendment issues: if training decisions are considered editorial judgments, the government might be compelling Anthropic to express values the company rejects.

Rozenshtein highlights a core contradiction: the Pentagon cannot simultaneously classify Anthropic as a security risk and treat it as indispensable to national defense under the DPA. Refusal could expose Anthropic to criminal penalties. The most likely path, he suggests, would be to comply under protest and immediately challenge the order in court.
