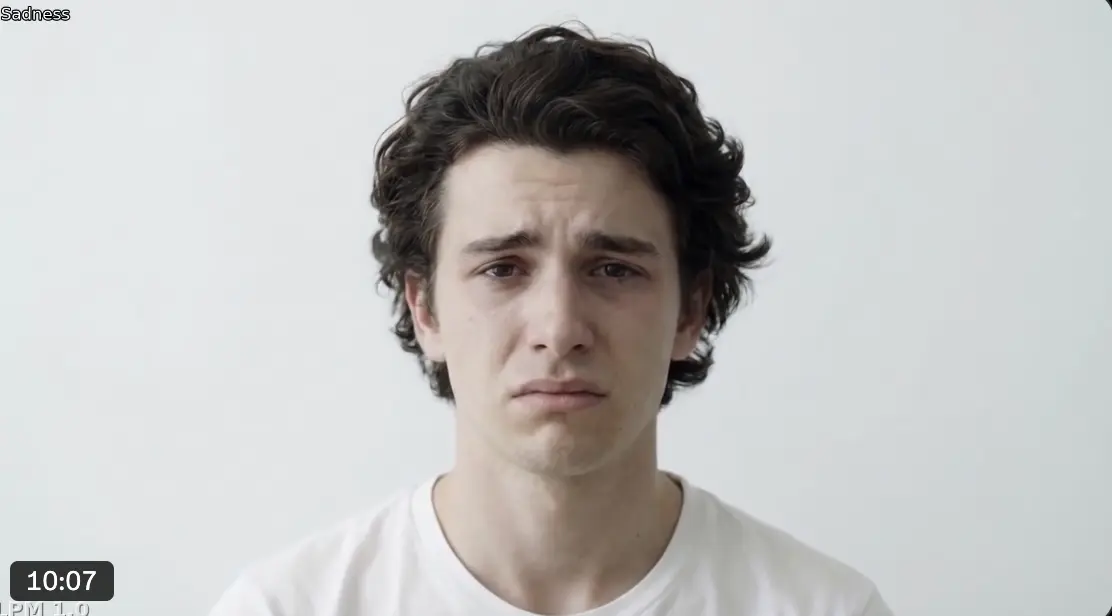

The model processes text, audio, and reference images at the same time, producing lip-synced speech, subtle facial expressions such as hesitation or gaze shifts, and smooth emotional transitions. It can also be connected directly to speech-based AI systems like ChatGPT or Doubao, enabling a real-time visual conversation partner.

LPM 1.0 works across multiple visual styles — including photorealistic faces, anime characters, and 3D game-style avatars — without additional training. Rather than rendering a full video in advance, it generates video as a real-time streaming process. According to the researchers, it can remain stable for videos lasting up to 45 minutes.

Technically, LPM 1.0 relies on a multi-stage identity conditioning approach. In addition to a main image, the model receives reference images from different angles and with different facial expressions. This allows it to preserve details such as teeth, wrinkles tied to specific emotions, or side-profile features, instead of inventing them on its own.

The model supports three conversational states. In listening mode, it generates reactive facial behavior such as nodding or shifting eye contact based on incoming audio. In speaking mode, reply audio drives lip movements and body language. During pauses, LPM uses text instructions to produce natural idle behavior.
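The three states described above can be pictured as a small state selector. The following is only an illustrative sketch, not the researchers' implementation: the names `ConversationState`, `DrivingSignal`, `select_state`, and `driving_signal` are hypothetical, and the rule that reply audio takes priority over incoming audio is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class ConversationState(Enum):
    LISTENING = "listening"
    SPEAKING = "speaking"
    IDLE = "idle"


@dataclass(frozen=True)
class DrivingSignal:
    source: str    # which input conditions the video in this state
    behavior: str  # kind of behavior the article attributes to the state


# Mapping taken from the description in the text; field names are hypothetical.
DRIVING_SIGNALS = {
    ConversationState.LISTENING: DrivingSignal(
        source="incoming audio",
        behavior="reactive facial behavior (nodding, shifting eye contact)",
    ),
    ConversationState.SPEAKING: DrivingSignal(
        source="reply audio",
        behavior="lip movements and body language",
    ),
    ConversationState.IDLE: DrivingSignal(
        source="text instruction",
        behavior="natural idle behavior",
    ),
}


def select_state(user_audio_active: bool, reply_audio_active: bool) -> ConversationState:
    """Pick a conversational state from two activity flags.

    Assumption: if both streams are active, the reply audio wins.
    The source does not say how overlapping speech is handled.
    """
    if reply_audio_active:
        return ConversationState.SPEAKING
    if user_audio_active:
        return ConversationState.LISTENING
    return ConversationState.IDLE


def driving_signal(user_audio_active: bool, reply_audio_active: bool) -> DrivingSignal:
    """Return the input that would drive video generation for the current state."""
    return DRIVING_SIGNALS[select_state(user_audio_active, reply_audio_active)]
```

In this sketch, a pause in which neither side is talking falls through to the idle state, where a text instruction rather than audio drives the character's behavior.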

Beyond live conversations, project lead Ailing Zeng says LPM 1.0 also supports offline video generation from pre-recorded audio, making it suitable for podcast visuals, film dialogue, and other content creation. Video-based control is not included in this version, but Zeng says it would be technically possible within the framework.

For now, it remains a research project

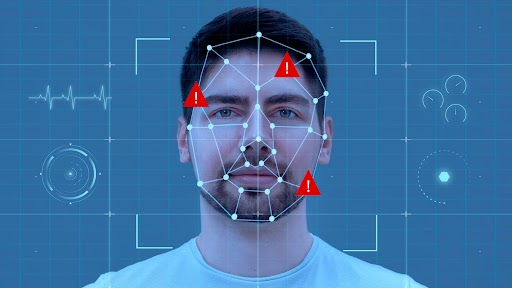

The development team stresses that LPM 1.0 is strictly a research project. They do not plan to release model weights, code, or a public demo. All faces shown in the project materials are AI-generated and do not depict real people. The researchers also acknowledge that the generated videos still contain visible artifacts, and their quantitative analysis confirms that there is still a gap between LPM output and real video quality.

The team further says it wants to develop AI responsibly and would only consider access under sufficient safeguards and clear usage frameworks. More details are available on the project page and in the technical report.

Even though LPM 1.0 is still only a research prototype, it points to where this technology is heading: AI systems may soon appear not just as text or voice, but as visually convincing characters capable of facial expression, eye contact, and emotional response. That could open up applications in education, gaming, customer service, and virtual companions.

At the same time, the technology carries clear risks, as it comes very close to enabling real-time deepfake infrastructure and could be misused for fraud, manipulation, or impersonation of real people. These harms already exist; what continues to fall is the barrier to entry. The researchers explicitly state that the system is not intended for deception, impersonation, or misleading representations of real individuals.
